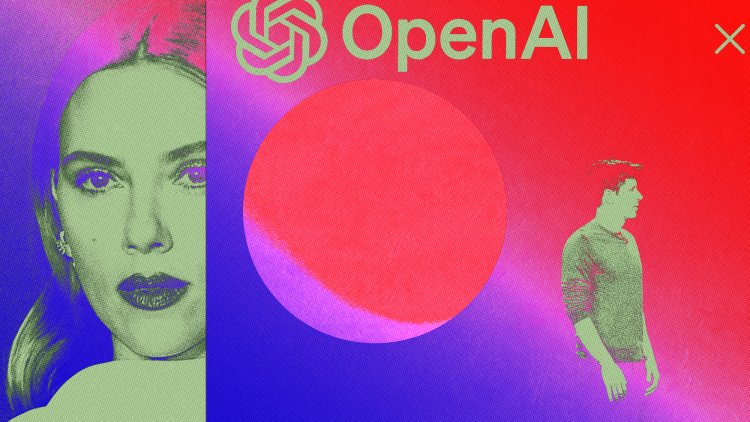

OpenAI’s Manifest Destiny

The Scarlett Johansson debacle is a microcosm of AI’s raw deal: It's happening, and you can't stop it.

If you’re looking to understand the philosophy that underpins Silicon Valley’s latest gold rush, look no further than OpenAI’s Scarlett Johansson debacle. The story, according to Johansson’s lawyers, goes like this: Nine months ago, OpenAI CEO Sam Altman approached the actor with a request to license her voice for a new digital assistant; Johansson declined. She alleges that just two days before the company’s keynote event last week, in which that assistant was revealed as part of a new system called GPT-4o, Altman reached out to Johansson’s team, urging the actor to reconsider. Johansson and Altman allegedly never spoke, and Johansson allegedly never granted OpenAI permission to use her voice. Nevertheless, the company debuted Sky two days later—a program with a voice many believed was alarmingly similar to Johansson’s.

Johansson told NPR that she was “shocked, angered and in disbelief that Mr. Altman would pursue a voice that sounded so eerily similar to mine.” In response, Altman issued a statement denying that the company had cloned her voice and saying that it had already cast a different voice actor before reaching out to Johansson. (I’d encourage you to listen for yourself.) Curiously, Altman said that OpenAI would take down Sky’s voice from its platform “out of respect” for Johansson. This is a messy situation for OpenAI, complicated by Altman’s own social-media posts. On the day that OpenAI released ChatGPT’s assistant, Altman posted a cheeky, one-word statement on X: “Her”—a reference to the 2013 film of the same name, in which Johansson is the voice of an AI assistant that a man falls in love with. Altman’s post is reasonably damning, implying that Altman was aware, even proud, of the similarities between Sky’s voice and Johansson’s.

On its own, this seems to be yet another example of a tech company blowing past ethical concerns and operating with impunity. But the situation is also a tidy microcosm of the raw deal at the center of generative AI, a technology that is built off data scraped from the internet, generally without the consent of creators or copyright owners. Multiple artists and publishers, including The New York Times, have sued AI companies for this reason, but the tech firms remain unchastened, prevaricating when asked point-blank about the provenance of their training data. At the core of these deflections is an implication: The hypothetical superintelligence they are building is too big, too world-changing, too important for prosaic concerns such as copyright and attribution. The Johansson scandal is merely a reminder of AI’s manifest-destiny philosophy: This is happening, whether you like it or not.

Altman and OpenAI have been candid on this front. The end goal of OpenAI has always been to build a so-called artificial general intelligence, or AGI, that would, in their imagining, alter the course of human history forever, ushering in an unthinkable revolution of productivity and prosperity—a utopian world where jobs disappear, replaced by some form of universal basic income, and humanity experiences quantum leaps in science and medicine. (Or, the machines cause life on Earth as we know it to end.) The stakes, in this hypothetical, are unimaginably high—all the more reason for OpenAI to accelerate progress by any means necessary. Last summer, my colleague Ross Andersen described Altman’s ambitions thusly:

> As with other grand projects of the 20th century, the voting public had a voice in both the aims and the execution of the Apollo missions. Altman made it clear that we’re no longer in that world. Rather than waiting around for it to return, or devoting his energies to making sure that it does, he is going full throttle forward in our present reality.

Part of Altman’s reasoning, he told Andersen, is that AI development is a geopolitical race against autocracies like China. “If you are a person of a liberal-democratic country, it is better for you to cheer on the success of OpenAI” rather than that of “authoritarian governments,” he said. He noted that, in an ideal world, AI should be a product of nations. But in this world, Altman seems to view his company as akin to its own nation-state. Altman, of course, has testified before Congress, urging lawmakers to regulate the technology while also stressing that “the benefits of the tools we have deployed so far vastly outweigh the risks.” Still, the message is clear: The future is coming, and you ought to let us be the ones to build it.

Other OpenAI employees have offered a less gracious vision. In a video posted last fall on YouTube by a group of effective altruists in the Netherlands, three OpenAI employees answered questions about the future of the technology. In response to one question about AGI rendering jobs obsolete, Jeff Wu, an engineer for the company, confessed, “It’s kind of deeply unfair that, you know, a group of people can just build AI and take everyone’s jobs away, and in some sense, there’s nothing you can do to stop them right now.” He added, “I don’t know. Raise awareness, get governments to care, get other people to care. Yeah. Or join us and have one of the few remaining jobs. I don’t know; it’s rough.” Wu’s colleague Daniel Kokotajlo jumped in with the justification. “To add to that,” he said, “AGI is going to create tremendous wealth. And if that wealth is distributed—even if it’s not equitably distributed, but the closer it is to equitable distribution, it’s going to make everyone incredibly wealthy.” (There is no evidence to suggest that the wealth will be evenly distributed.)

This is the unvarnished logic of OpenAI. It is cold, rationalist, and paternalistic. That such a small group of people should be anointed to build a civilization-changing technology is inherently unfair, they note. And yet they will carry on because they have both a vision for the future and the means to try to bring it to fruition. Wu’s proposition, which he offers with a resigned shrug in the video, is telling: You can try to fight this, but you can’t stop it. Your best bet is to get on board.

You can see this dynamic playing out in OpenAI’s content-licensing agreements, which it has struck with platforms such as Reddit and news organizations such as Axel Springer and Dotdash Meredith. Recently, a tech executive I spoke with compared these types of agreements to a hostage situation, suggesting that AI companies will find ways to scrape publishers’ websites anyway if the publishers don’t comply. Best to get a paltry fee out of them while you can, the person argued.

The Johansson accusations only compound (and, if true, validate) these suspicions. Altman’s alleged reasoning for commissioning Johansson’s voice was that her familiar timbre might be “comforting to people” who find AI assistants off-putting. Her likeness would have been less about a particular voice-bot aesthetic and more of an adoption hack or a recruitment tool for a technology that many people didn’t ask for and seem uneasy about. Here, again, is the logic of OpenAI at work. It follows that the company would plow ahead, consent be damned, simply because it might believe the stakes are too high to pivot or wait. When your technology aims to rewrite the rules of society, it stands to reason that society’s current rules need not apply.

Hubris and entitlement are inherent in the development of any transformative technology. A small group of people needs to feel confident enough in its vision to bring it into the world and ask the rest of us to adapt. But generative AI stretches this dynamic to the point of absurdity. It is a technology that requires a mindset of manifest destiny, of dominion and conquest. It’s not stealing to build the future if you believe it has belonged to you all along.
